Background

In March, we documented how we got 51-54 tokens/sec out of the NVIDIA DGX Spark by combining a Mixture-of-Experts model (Qwen3-30B-A3B), FP8 quantization, and the Avarok community Docker image to work around Blackwell SM 12.1 support gaps.

That stack was stable and served our general-purpose inference well. But when we installed a DGX Spark at the XRPL Commons office, we wanted more: native tool calling and reasoning mode — features that turn an LLM from a text generator into an agent.

Qwen3 can do tool calling with the right prompting, but we wanted to evaluate Gemma 4, which Google released in April with first-class function calling and a configurable thinking mode built for agentic workflows.

This post covers:

- Why we picked Gemma 4 for the office Spark

- What had to change in the deployment

- The throughput we actually measured

Why Gemma 4

Google released Gemma 4 in early April 2026 with an MoE variant that fits the DGX Spark sweet spot:

- gemma-4-26B-A4B — 26B total parameters, 4B active per token

- 256K context (we run with 131K to fit KV cache)

- Native function calling with a dedicated tool-call parser

- Configurable thinking mode (reasoning parser) — the model can emit a private thought process before responding

- Multimodal — text + image input (plus audio on smaller variants)

- 140+ languages supported

- Apache 2.0 license — no strings attached

For our use case — an agent calling local XRPL tools, drafting documents, coordinating with team members — native tool calling is the big unlock. You can pass an OpenAI-style tools array to the chat completions API and the model emits structured tool_calls back. No prompting gymnastics, no custom parsing.

The MoE architecture keeps us on the right side of the Spark's 273 GB/s memory bandwidth wall. 4B active parameters is slightly more than Qwen3's 3B, so we expected slightly lower single-stream throughput. In practice we measured 51 tok/s at steady state — essentially the theoretical ceiling (273 GB/s ÷ ~4B active weights @ FP4 ≈ 50 tok/s). The trade for slightly fewer tokens per second: native tool calling, reasoning mode, 131K context, and multimodal input.

The Model Choice: NVFP4

We picked the bg-digitalservices/Gemma-4-26B-A4B-it-NVFP4 quantization. A few reasons:

- NVFP4 (NVIDIA's FP4 format) is designed specifically for Blackwell's fifth-gen Tensor Cores

- The GB10 supports NVFP4 natively in hardware

- Quality degradation vs bf16 is reportedly minimal for instruction-tuned models

- It's the smallest on-disk footprint, giving us more KV cache headroom

For the inference engine, we moved off the Avarok image for this model. Avarok is excellent for Qwen-style models, but Gemma 4 support is still landing upstream. We switched to the official vLLM image vllm/vllm-openai:gemma4-cu130 — a Gemma-4-specific build from the vLLM team with CUDA 13.0.

What Broke (and How We Fixed It)

The gemma4.py Bug

The stock vLLM Gemma 4 model executor crashed on load with our NVFP4 checkpoint. We had to mount a patched gemma4.py into the container to replace the built-in one:

-v /home/$USER/vllm/gemma4_patched.py:/usr/local/lib/python3.12/dist-packages/vllm/model_executor/models/gemma4.py:ro

The patch is ~50KB of Python — mostly adjustments to how NVFP4 weights are loaded into the fused MoE layers.

NVFP4 MoE Backend Selection

The default MoE backend for NVFP4 on GB10 can trigger a known vLLM crash. Explicitly selecting the Marlinbackend avoids it:

--moe-backend marlin

Marlin is a CUTLASS-based INT4/FP4 GEMM kernel that's mature and fast on Blackwell.

Heterogeneous Head Dimensions

Gemma 4 uses different attention head dimensions for local vs global attention (256 vs 512). vLLM automatically detects this and forces the TRITON_ATTN backend to avoid mixed-backend numerical divergence. Nothing for us to configure — it just works — but worth understanding why Flash Attention isn't in play here.

KV Cache Memory

We enable --kv-cache-dtype fp8 to halve KV cache memory. With 131K context and 16 concurrent sequences, this makes the difference between fitting in 128GB and OOMing. Quality impact is negligible for our workloads.

Docker Runtime Gotcha

On the office Spark, the nvidia Docker runtime wasn't registered (only the container toolkit was installed). The fix was to use --gpus all instead of --runtime nvidia — the older and more portable flag. Worth knowing if you see unknown or invalid runtime name: nvidia.

The Full Launch Command

docker run -d \

--name vllm-avarok \

--gpus all \

--shm-size=16g \

--restart unless-stopped \

-p 8000:8888 \

-v /home/$USER/.cache/huggingface:/root/.cache/huggingface \

-v /home/$USER/vllm/gemma4_patched.py:/usr/local/lib/python3.12/dist-packages/vllm/model_executor/models/gemma4.py:ro \

vllm/vllm-openai:gemma4-cu130 \

--model bg-digitalservices/Gemma-4-26B-A4B-it-NVFP4 \

--served-model-name google/gemma-4-26B-A4B-it \

--host 0.0.0.0 \

--port 8888 \

--quantization modelopt \

--moe-backend marlin \

--kv-cache-dtype fp8 \

--enable-prefix-caching \

--enable-chunked-prefill \

--max-model-len 131072 \

--gpu-memory-utilization 0.85 \

--max-num-seqs 16 \

--enable-auto-tool-choice \

--tool-call-parser gemma4 \

--reasoning-parser gemma4

A few flags to highlight:

--served-model-name google/gemma-4-26B-A4B-it--enable-auto-tool-choice + --tool-call-parser gemma4--reasoning-parser gemma4--enable-prefix-caching + --enable-chunked-prefill--max-num-seqs 16

First boot takes 10-20 minutes (model download + load + CUDA graph capture). Subsequent restarts take ~3 minutes.

What Tool Calling Unlocks

With Gemma 4 running, we can now pass tool schemas directly to the API:

from openai import OpenAI

client = OpenAI(base_url="http://spark:8000/v1", api_key="unused")

tools = [{

"type": "function",

"function": {

"name": "get_xrpl_account_balance",

"description": "Fetch the XRP balance for an XRPL account",

"parameters": {

"type": "object",

"properties": {

"address": {"type": "string", "description": "Classic XRPL address starting with r"}

},

"required": ["address"]

}

}

}]

response = client.chat.completions.create(

model="google/gemma-4-26B-A4B-it",

messages=[{"role": "user", "content": "How much XRP does rMCU4... hold?"}],

tools=tools,

tool_choice="auto",

)

# response.choices[0].message.tool_calls -> [ChatCompletionMessageToolCall(...)]

This is the foundation for agentic workflows that previously required prompt engineering or separate function-calling layers (like LiteLLM's function-call emulation). With Gemma 4, the model speaks tool calls natively.

The reasoning mode is similarly useful. For complex queries, the model emits a private thinking trace before its final answer — we can log it for debugging, show it to users for transparency, or strip it entirely. All via the --reasoning-parser flag.

The Benchmarks

We ran a proper benchmark suite from the Spark itself (not over the network, to remove noise): throughput at 100/500/2000-token outputs, time-to-first-token, concurrency scaling, prefix-cache hit/miss, long-context prefill, and tool-calling overhead.

Single-stream throughput

The short-generation number is lower only because warmup dominates. Anything over ~200 tokens hits the steady-state ~50 tok/s. That matches the theoretical ceiling for 4B active parameters at FP4 on 273 GB/s bandwidth.

Concurrency — the big win for agentic workflows

This is where the Spark shines. vLLM's continuous batching + Marlin NVFP4 MoE kernel keeps per-request throughput high even as you add parallel clients:

5.4× aggregate throughput at 8 concurrent clients. For a team of devs running agents in parallel, or a single agent making parallel tool-call decisions, this is huge.

Prefix caching — massive for agent loops

Agent workflows resubmit the same system prompt, same tool schema, same conversation history over and over. Measured on an 8K-token prefix:

8.2× speedup. If you're building anything where the same prefix repeats — ReAct loops, multi-turn conversations, a shared system prompt — prefix caching alone pays for running local inference.

TTFT and long context

- Short prompt TTFT: 54 ms p50 (excellent)

- 4K prompt TTFT: 62 ms p50

- 8K prefill: 73 ms

- 32K prefill: 10.1 seconds

- 100K prefill: 78 seconds

Long-context prefill is the one real weakness. It's compute-bound on the MoE GEMM kernel — not bandwidth-bound. At 131K context you're paying 80+ seconds of prefill time every cold turn. If you need long-context frequently, design your agents to reuse prefixes so the cache does the heavy lifting.

Tool calling

Works out of the box with --enable-auto-tool-choice --tool-call-parser gemma4. No meaningful overhead versus text-only completion — the model just emits shorter, structured output when it decides to call a tool.

Trying to Push Throughput Further

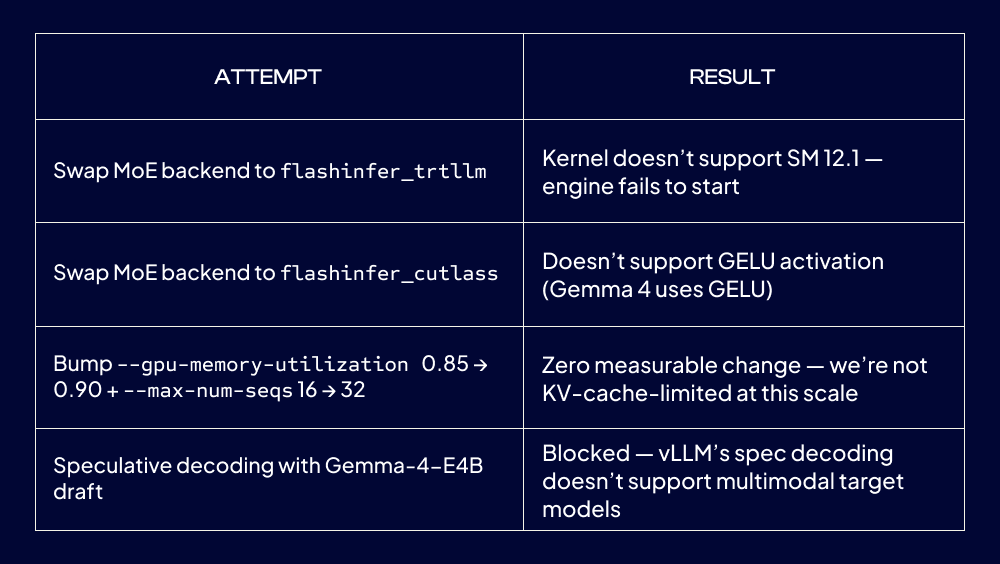

With the baseline measured, we tried four paths to push single-stream throughput higher. All four hit walls:

The last one hurt. Spec decoding was the one real lever: a small 4B draft model predicting tokens for the 26B target can deliver 1.5-2× throughput on repetitive outputs. But vLLM explicitly rejects it for multimodal models right now, and Gemma 4 is multimodal. The two features we most wanted — speculative decoding and image input — are mutually exclusive in today's vLLM.

Conclusion: the baseline config is already at the hardware + software ceiling. Generation throughput is memory-bandwidth-bound. Prefill is compute-bound on the only NVFP4 MoE kernel that works on GB10 + Gemma 4 (Marlin). There's no software knob we haven't turned.

What Would Change the Picture

Future improvements we'll re-test when they land:

- vLLM adds multimodal spec decoding — unlocks Gemma-4-E4B as draft, expected 1.5-2×

- FlashInfer adds SM 12.1 + GELU support — alternative MoE backend, could improve prefill

- Community ships a clean Gemma 4 AWQ INT4 quant — Qwen AWQ hit 82 tok/s on the same hardware

- NVIDIA publishes an official NVFP4 Gemma 4 build — likely has better-tuned kernels

Plan: re-run the benchmark suite (checked in at projects/dgx-spark-benchmarks/bench.py) every couple of months and see if the landscape has shifted.

What's Next for Us

- Multimodal testing — Gemma 4 accepts image inputs. Document parsing and UI automation are the obvious use cases.

- Local speech-to-text —

faster-whisperon the Grace CPU cores, leaving the GPU fully dedicated to Gemma 4. Arm published a playbook for exactly this split on the Spark. - Agent workload benchmarks — beyond synthetic throughput, measure actual tool-call accuracy and reasoning quality on our real XRPL workflows.

Resources

- Gemma 4 on HuggingFace

- bg-digitalservices/Gemma-4-26B-A4B-it-NVFP4

- vLLM Gemma 4 recipe

- Our original DGX Spark setup post

- Full setup guide with troubleshooting

Try It Yourself

If you have a DGX Spark and want to run Gemma 4: pull the vllm/vllm-openai:gemma4-cu130 image, get a working gemma4_patched.py, and use the docker run command above. Allow ~20 minutes for the first boot. Reach out at XRPL Commons if you hit issues — we've documented most of the sharp edges.